Detecting and Eliminating Spam Events

Data quality is crucial for accurate decision making

Today’s age of data abundance and advanced analytics has opened up vast opportunities to enhance business procedures and develop evidence-based decision-making processes. Modern businesses apply state-of-the-art machine learning and data mining technologies to build a wide range of analysis applications, such as demand forecasting, recommendation systems, and targeted marketing.

However, business and data analysis practitioners realize that evidence-based decision making is only as good as the data it’s derived from. For instance, imagine developing a demand forecasting model to optimize your logistics and pricing operations during important concerts and sports events, or building an application that recommends events based on users’ preferences. In both cases, the last thing you want is to feed spammy content to your forecasting models and recommendation systems.

As the world’s largest source of demand intelligence, PredictHQ understands the critical importance of providing trusted datasets by filtering out different types of irrelevant, fake and deceptive event listings.

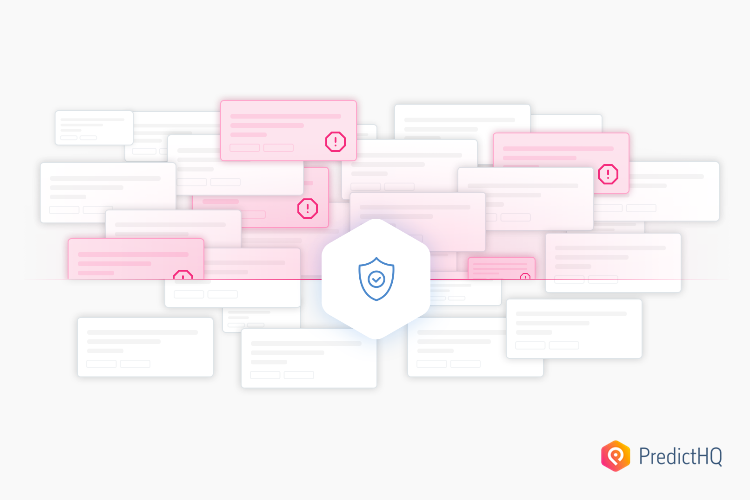

Our data quality analysis highlighted that event records can include a wide range of irrelevant “spam” events with potentially misleading metadata. For instance, fake event listings can include business advertisements, drug sales, test events, illegal video streaming and other types of malicious activity. Such spammy event records often carry a seemingly valid physical address and expected attendance metadata, which makes them more difficult to detect.

Therefore, having reliable data quality systems and procedures is fundamental to ensure your data-driven decisions and forecasting models are all based on good events.

Automating spam detection

To achieve high data quality standards, we have developed a comprehensive set of systems and tools to ingest, standardize, verify, enrich, monitor and filter a vast amount of raw data. Our issue-handling system and data processing pipeline apply a variety of strategies to tackle spam and unwanted event records. For instance, we have developed several machine learning models that automatically detect and remove spam events and event records that don’t comply with our quality standards.

In addition, our team of data analysts continuously looks for new patterns of problematic and fraudulent events, so that we can develop new rules to further optimize our automated spam detection models.
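As a rough illustration of what a rule-based filter of this kind might look like, here is a minimal Python sketch. It is not PredictHQ’s implementation: the `EventRecord` fields, the `SPAM_PATTERNS` rules and the function names are all hypothetical, chosen only to mirror some of the spam categories mentioned earlier (illegal streaming, test events, advertisements).

```python
import re
from dataclasses import dataclass

# Hypothetical rules for illustration only; a production system would
# combine many more signals, including machine learning models.
SPAM_PATTERNS = {
    "streaming": re.compile(r"\b(live\s*stream|watch\s+online|free\s+hd)\b", re.IGNORECASE),
    "test_event": re.compile(r"\b(test\s+event|do\s+not\s+attend)\b", re.IGNORECASE),
    "advertisement": re.compile(r"\b(buy\s+now|discount\s+code|call\s+now)\b", re.IGNORECASE),
}


@dataclass
class EventRecord:
    """Assumed minimal shape of a raw event listing."""
    title: str
    description: str = ""


def matched_rules(event):
    """Return the sorted names of all rules that the event's text triggers."""
    text = f"{event.title} {event.description}"
    return sorted(name for name, pattern in SPAM_PATTERNS.items() if pattern.search(text))


def filter_events(events):
    """Keep only the events that trigger no spam rule."""
    return [event for event in events if not matched_rules(event)]


if __name__ == "__main__":
    events = [
        EventRecord("Watch online: Cup Final free HD"),
        EventRecord("City Marathon", "Annual road race through the city centre"),
        EventRecord("Test event", "do not attend"),
    ]
    for event in filter_events(events):
        print(event.title)
```

Keeping the rules in a single dictionary means analysts can add a new pattern without touching the filtering logic, which matches the idea of continuously extending the rule set as new spam patterns appear.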

A friction-free data experience

PredictHQ’s extensive data pre-processing eliminates the headaches of working with multiple data providers and dirty or unstructured data. Our data quality technology monitors, verifies, enriches and fixes many types of data quality issues, including spam. This capability is unique in the industry and gives our customers a friction-free data experience with our API. PredictHQ leads the way in data intelligence so that businesses using our data can focus on their core capabilities and leave the heavy lifting to us.